Keynote Speakers

The following three speakers will give keynote talks at ICICS 2022. All times are given in UK Summer Time (UTC+1).

Keynote I by Ross Anderson, University of Cambridge and University of Edinburgh, UK

Date and Time: Monday 5th September 2022 9-10am

Delivery Method: in-person

Title: Future Challenges in Security Engineering


Abstract: Now that we're putting software and network connections into durable safety-critical goods such as cars and medical devices, we have to patch vulnerabilities, just as we do with phones and laptops. The EU has responded with Directive 2019/771, which gives consumers the right to software updates for goods with digital elements for the time period they might reasonably expect, which for durable goods like cars typically means ten years. In my talk I'll describe the background and the likely future effects. What tools should you use to write software for a car that will go on sale in 2023, if you have to support security patches and safety upgrades until 2043? The challenges may get even tougher as we start to incorporate components that use machine learning, as the data scientists who maintain large ML models don't have the patching culture that C programmers have. The costs of software maintenance look set to dominate more industries than at present, and to set practical limits on design complexity. This in turn will create opportunities for high-impact research over the next twenty years.

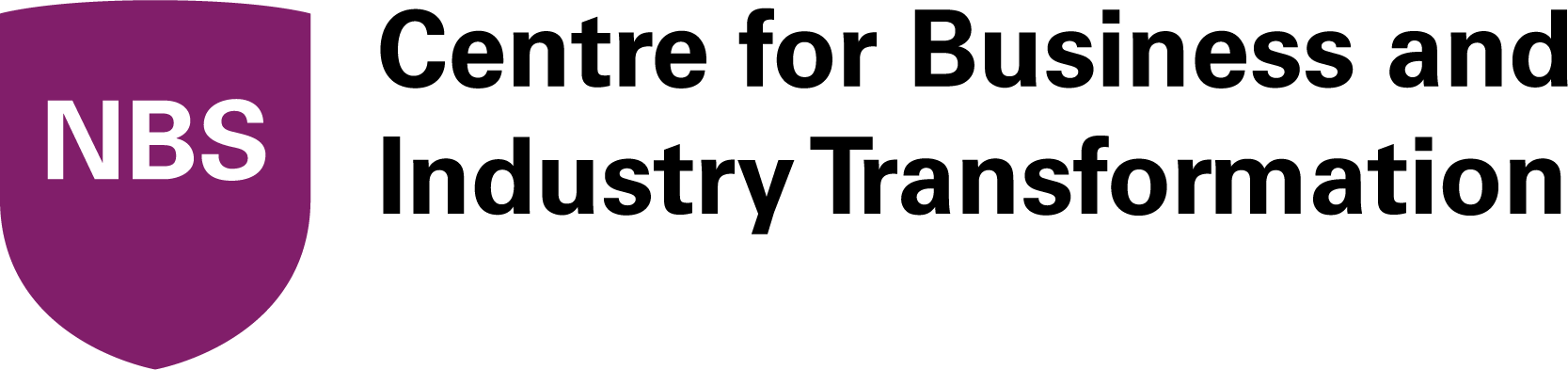

Bio: Ross Anderson is Professor of Security Engineering at Cambridge and Edinburgh Universities. His research has ranged over cryptography, hardware security, usability, payment systems, healthcare IT, adversarial machine learning, the economics of security and privacy, and the measurement of cybercrime and abuse. He is a Fellow of both the Royal Society and the Royal Academy of Engineering, and author of the textbook "Security Engineering – A Guide to Building Dependable Distributed Systems".

Keynote II by Nicholas Carlini, Google, USA

Date and Time: Tuesday 6th September 2022 2-3pm

Delivery Method: virtual (via Zoom)

Title: Underspecified Foundation Models Considered Harmful

Abstract: Instead of training neural networks to solve any one particular task, it is now common to train neural networks to behave as a “foundation” upon which future models can be built. Because these models train on unlabeled and uncurated datasets, their objective functions are necessarily underspecified and not easily controlled.

In this talk I argue that while training underspecified models at scale may benefit accuracy, it comes at a cost to their security. As evidence, I present two case studies in the domains of semi- and self-supervised learning where an adversary can poison the unlabeled training dataset to perform various attacks. Addressing these challenges will require new categories of defenses to simultaneously allow models to train on large datasets while also being robust to adversarial training data.

Bio: Nicholas Carlini is a research scientist at Google Brain. He studies the security and privacy of machine learning, and for this work he has received best paper awards at ICML, USENIX Security and IEEE S&P. He obtained his PhD from the University of California, Berkeley in 2018.

Keynote III by Guang Gong, University of Waterloo, Canada

Date and Time: Wednesday 7th September 2022 3:30-4:30pm

Delivery Method: virtual (via Zoom)

Title: Toward Practical Privacy Protections for Blockchain Networks

Abstract: Permissionless and permissioned blockchain systems, which are decentralized and capable of transferring trusted consensus, computation, and immutable data between untrusted entities, have strong potential for numerous applications in which value or data is transferred, shared, stored, and processed. All existing blockchain privacy solutions employ advanced “cryptographic engines”, namely zero-knowledge proof verifiable computation schemes. Currently, the most promising approach is the zero-knowledge Succinct Non-interactive Argument of Knowledge (zkSNARK). zkSNARKs are built either on (fully) homomorphic encryption (e.g., GGPR13/Groth16, implemented in Zcash) or on probabilistically checkable proofs (PCPs) (e.g., the lightweight Ligero, STARK, and Aurora). In this talk, I will first present an overview of current research along this line. I will then present a concrete example of a zkSNARK scheme: Polaris, our recent work for Rank-1 Constraint Satisfaction (R1CS) circuits. In Polaris, both the verifier's cost and the proof size are bounded by the square of the logarithm of the arithmetic circuit's size. The scheme requires no trusted setup, and it is plausibly post-quantum secure when the underlying hash function is plausibly post-quantum resistant. For instance, when verifying a SHA-256 preimage (about 23k AND gates) in zero knowledge with 128-bit security, the proof size is less than 150 kB and the verification time is less than 11 ms. Both figures are competitive with existing systems, and Polaris offers better concrete verifier complexity.
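As background on the R1CS term above, the following is a minimal sketch of what it means for a witness to satisfy a rank-1 constraint system. Given matrices A, B, C and a vector z, every row i must satisfy (A z)_i · (B z)_i = (C z)_i over a finite field. The field modulus, the helper names `mat_vec` and `r1cs_satisfied`, and the toy statement x³ + x + 5 = 35 are illustrative assumptions. The sketch only checks satisfaction directly and is not Polaris or any zero-knowledge protocol.

```python
# Illustrative sketch only: a direct R1CS satisfaction check, not a zkSNARK.
# The modulus P, the helper names and the toy circuit are hypothetical choices.

P = 2**61 - 1  # hypothetical prime field modulus chosen for illustration


def mat_vec(M, z, p=P):
    """Multiply matrix M (a list of rows) by vector z modulo p."""
    return [sum(m * x for m, x in zip(row, z)) % p for row in M]


def r1cs_satisfied(A, B, C, z, p=P):
    """Return True iff <A_i, z> * <B_i, z> == <C_i, z> (mod p) for every row i."""
    az, bz, cz = mat_vec(A, z, p), mat_vec(B, z, p), mat_vec(C, z, p)
    return all((a * b - c) % p == 0 for a, b, c in zip(az, bz, cz))


# Toy statement: "I know x with x^3 + x + 5 = 35".
# Witness layout z = [1, x, v1, v2, out], where v1 = x*x, v2 = v1*x, out = v2 + x + 5.
A = [
    [0, 1, 0, 0, 0],  # x
    [0, 0, 1, 0, 0],  # v1
    [5, 1, 0, 1, 0],  # v2 + x + 5
]
B = [
    [0, 1, 0, 0, 0],  # x
    [0, 1, 0, 0, 0],  # x
    [1, 0, 0, 0, 0],  # constant 1
]
C = [
    [0, 0, 1, 0, 0],  # v1
    [0, 0, 0, 1, 0],  # v2
    [0, 0, 0, 0, 1],  # out
]

print(r1cs_satisfied(A, B, C, [1, 3, 9, 27, 35]))   # x = 3 satisfies all constraints
print(r1cs_satisfied(A, B, C, [1, 4, 16, 64, 35]))  # x = 4 violates the last constraint
```

A zkSNARK lets a prover convince a verifier that such a z exists without revealing it. The direct check above reveals z, and its cost grows with the size of the constraint system, whereas the abstract describes Polaris's verifier cost as growing only with the square of the logarithm of the circuit size.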

Bio: Dr. Guang Gong has been a professor in the Department of Electrical and Computer Engineering at the University of Waterloo, Canada, since 2004. Her current research focuses on pseudorandom generation, lightweight cryptography (LWC), IoT security, blockchain privacy, and privacy-preserving machine learning. She has authored or coauthored more than 360 technical papers, two books, and three patents. She serves or has served as an Associate Editor for several journals, including the IEEE Transactions on Information Theory (2005-2008, 2017-2018, 2020-), the IEEE Transactions on Dependable and Secure Computing (2021-), and the Journal of Cryptography and Communications (2007-). She has also served as co-chair, organizer, or committee member for numerous conferences and technical program committees. Dr. Gong has received several awards and honors, including the Premier's Research Excellence Award, Ontario, Canada (2001), the Ontario Research Fund Research Excellence Award (2010), IEEE Fellow (2014) for her contributions to sequences and cryptography applied to communications and security, and the University Research Chair (2018-2024). Dr. Gong's research is supported by government funding agencies and by industry grants.